Build a Load Balancer in Python From Scratch

Round-robin, weighted, and least-connections — three algorithms you configure in nginx every week, now built from zero

You have written this exact config hundreds of times:

upstream backend {

server 10.0.0.1 weight=3;

server 10.0.0.2 weight=1;

server 10.0.0.3 weight=1;

}

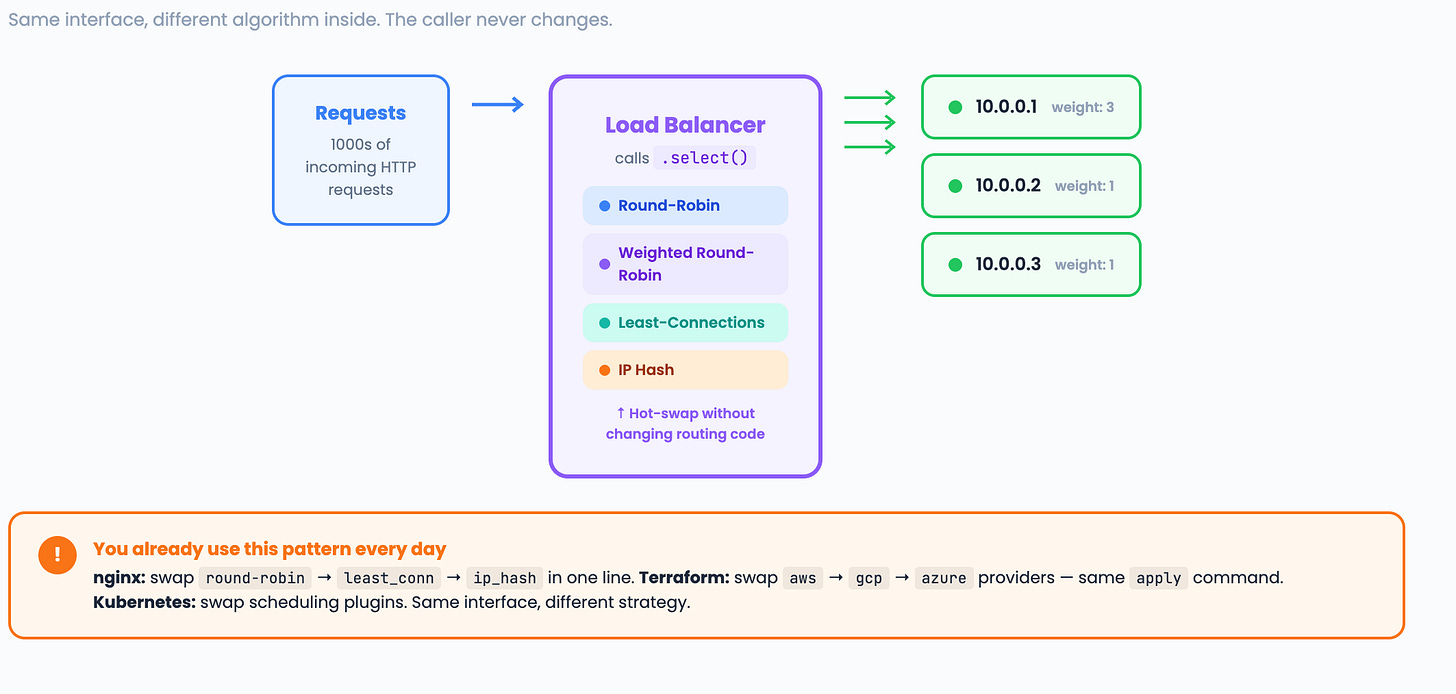

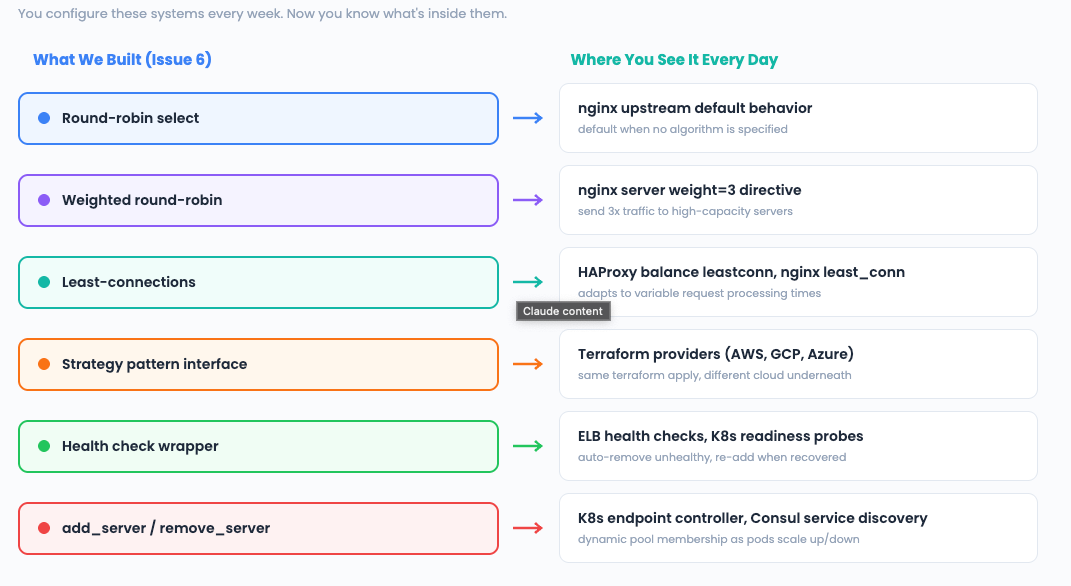

But have you ever built what happens inside that upstream block? Today, we build three load balancing algorithms from scratch and learn the design pattern that lets you swap them at runtime without changing a single line of caller code.

The 3-Question Framework

What does the system DO? It distributes incoming requests across multiple backend servers. This is the Strategy pattern — the load balancer owns a pluggable algorithm that decides which server gets the next request.

You can swap algorithms (round-robin, weighted, and least-connections) at runtime without changing the routing code.

What operations must be FAST? Every single request passes through the load balancer. Selecting the next server must be O(1).

We cannot scan all servers or sort them on every request.

A list + index counter gives us O(1) round-robin.

A pre-expanded list gives us O(1) weighted selection.

A min-heap or linear scan handles the least connections.

What is the core LOGIC? Each algorithm answers the same question differently: “Given N servers, which one should handle this request?”

Pattern: Strategy (swap algorithms at runtime)

Structure: list + counter (round-robin), expanded list (weighted), heap (least-conn)

Algorithm: Stateless rotation, weighted expansion, connection-aware selection

The Strategy Pattern: Why It Matters Here

Before we write any load-balancing code, let us build the pattern that holds it all together. The Strategy pattern is one of the most useful patterns in system design interviews — and one you already use daily.

from abc import ABC, abstractmethod

class LoadBalancer(ABC):

"""Base class — all algorithms implement this interface."""

@abstractmethod

def select(self) -> str:

"""Return the address of the next server to handle a request."""

pass

@abstractmethod

def add_server(self, address: str, weight: int = 1) -> None:

pass

@abstractmethod

def remove_server(self, address: str) -> None:

pass

Every algorithm we build will implement this interface. The caller never knows which algorithm is running, and it just calls select() and gets a server address. This is exactly how nginx, HAProxy, and Kubernetes Services work internally.

Interview phrasing: “I would use the Strategy pattern so we can swap load-balancing algorithms without changing the routing code. This is the same pattern behind Terraform providers — same interface, different implementation.”

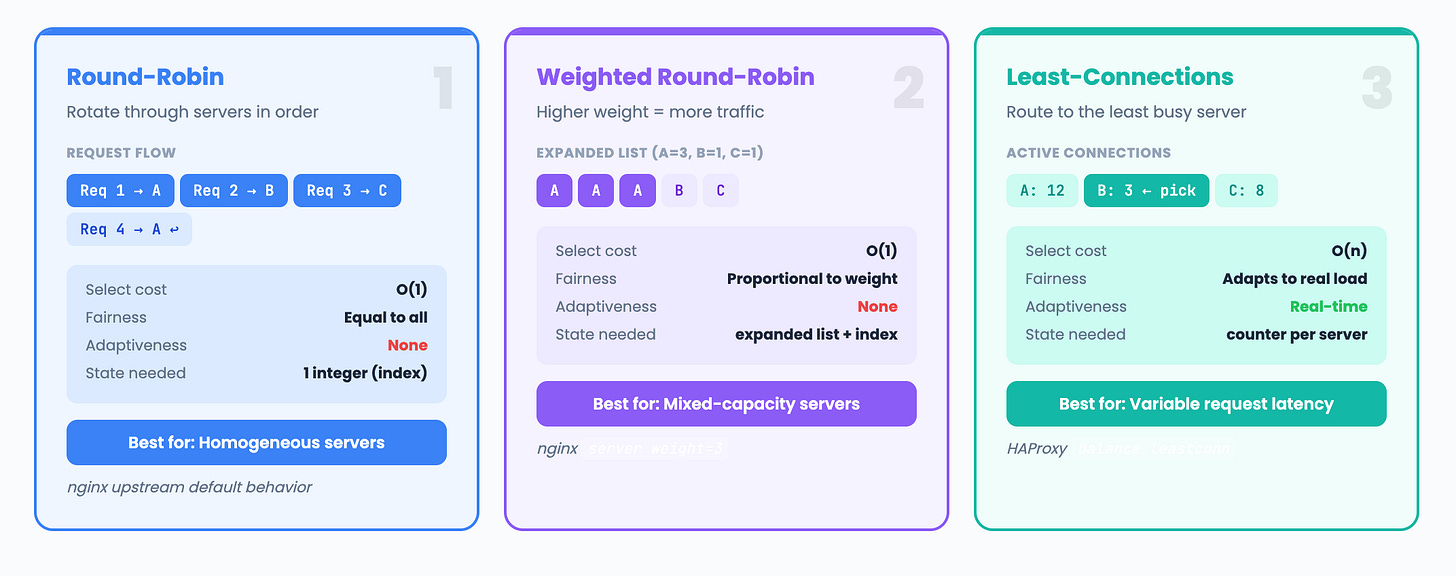

Algorithm 1: Round-Robin

The simplest approach. Rotate through servers in order. Every server gets equal traffic.

import threading

class RoundRobinBalancer(LoadBalancer):

"""Distribute requests evenly across all servers in rotation."""

def __init__(self):

self._servers = []

self._index = 0

self._lock = threading.Lock()

def add_server(self, address: str, weight: int = 1) -> None:

with self._lock:

if address not in self._servers:

self._servers.append(address)

def remove_server(self, address: str) -> None:

with self._lock:

if address in self._servers:

self._servers.remove(address)

if self._index >= len(self._servers):

self._index = 0

def select(self) -> str:

with self._lock:

if not self._servers:

raise RuntimeError("No servers available")

server = self._servers[self._index]

self._index = (self._index + 1) % len(self._servers)

return server

Complexity: O(1) select. O(n) add/remove (list operations), but these are rare.

Request 1 → Server A

Request 2 → Server B

Request 3 → Server C

Request 4 → Server A (wraps around)

Request 5 → Server B

...

When to use: All servers have equal capacity. Simple, predictable, stateless.

When it fails: If server A is a 32-core machine and server B is a 2-core machine, round-robin sends them equal traffic. Server B collapses.

Algorithm 2: Weighted Round-Robin

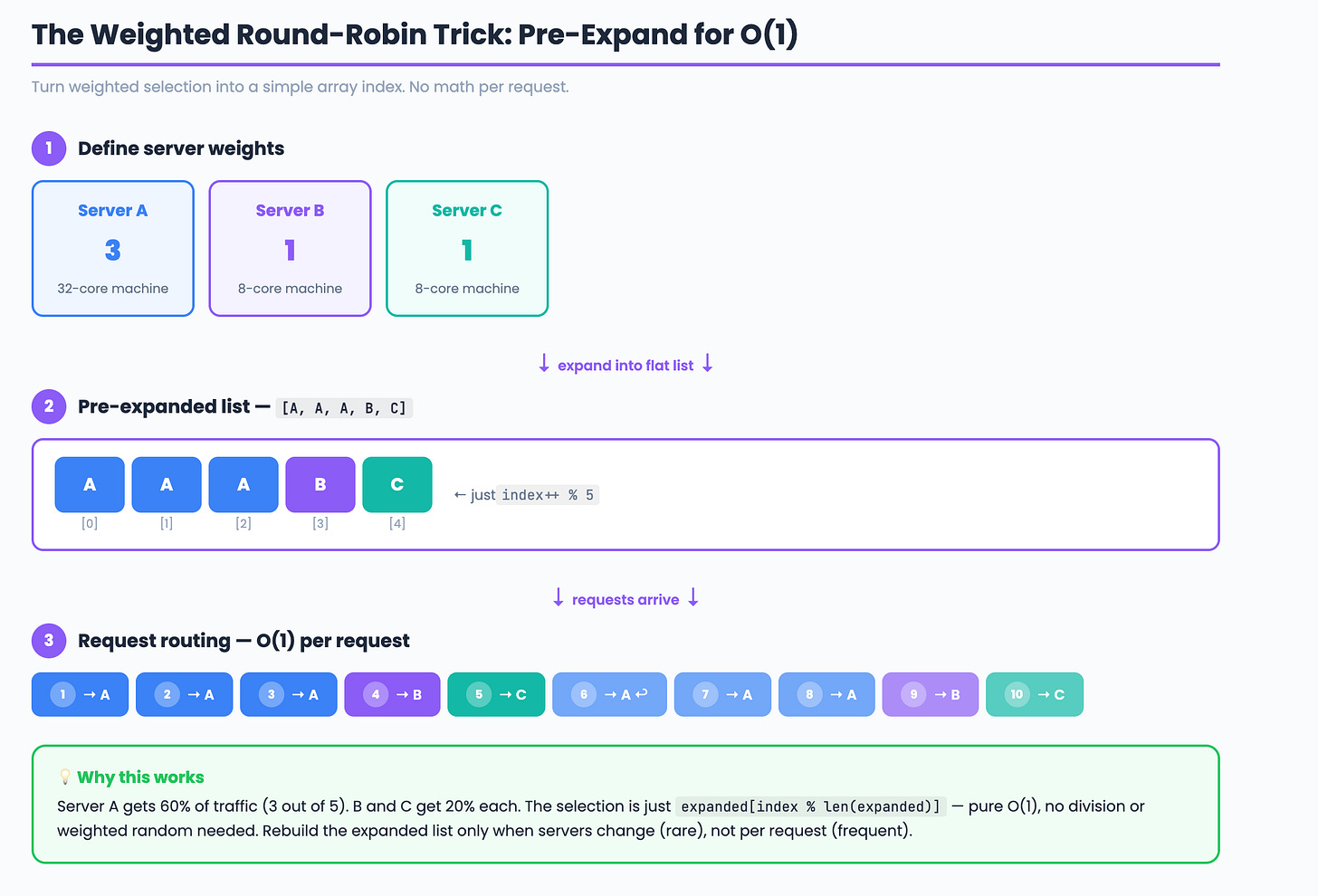

Assign weights to servers. Higher-weight servers get proportionally more traffic.

class WeightedRoundRobinBalancer(LoadBalancer):

"""Servers with higher weight receive more requests."""

def __init__(self):

self._servers = {} # address -> weight

self._expanded = [] # pre-expanded list for O(1) select

self._index = 0

self._lock = threading.Lock()

def add_server(self, address: str, weight: int = 1) -> None:

with self._lock:

self._servers[address] = weight

self._rebuild()

def remove_server(self, address: str) -> None:

with self._lock:

self._servers.pop(address, None)

self._rebuild()

def _rebuild(self):

"""Expand weights into a flat list for O(1) selection."""

self._expanded = []

for address, weight in self._servers.items():

self._expanded.extend([address] * weight)

self._index = 0

def select(self) -> str:

with self._lock:

if not self._expanded:

raise RuntimeError("No servers available")

server = self._expanded[self._index]

self._index = (self._index + 1) % len(self._expanded)

return server

Complexity: O(1) select. O(total_weight) rebuild on add/remove.

The trick: Pre-expanding weights into a flat list turns weighted selection into a simple array index. No math per request.

When to use: Servers have different capacities. This is the weight=3 in your nginx upstream config.

Algorithm 3: Least-Connections

Route to the server with the fewest active connections. The most adaptive approach.

class LeastConnectionsBalancer(LoadBalancer):

"""Route to the server with the fewest active connections."""

def __init__(self):

self._connections = {} # address -> active connection count

self._lock = threading.Lock()

def add_server(self, address: str, weight: int = 1) -> None:

with self._lock:

if address not in self._connections:

self._connections[address] = 0

def remove_server(self, address: str) -> None:

with self._lock:

self._connections.pop(address, None)

def select(self) -> str:

with self._lock:

if not self._connections:

raise RuntimeError("No servers available")

# Find server with minimum connections

server = min(self._connections, key=self._connections.get)

self._connections[server] += 1

return server

def release(self, address: str) -> None:

"""Call when a request completes."""

with self._lock:

if address in self._connections:

self._connections[address] = max(0, self._connections[address] - 1)

Complexity: O(n) select (scans all servers). For small server counts (typically <100), this is negligible. For massive pools, use a min-heap.

Server A: 12 active connections

Server B: 3 active connections ← selected

Server C: 8 active connections

New request → B (fewest connections)

B now has 4 active connections

When to use: Requests have variable processing time (some take 10ms, some take 10s). Round-robin would overload a server stuck on slow requests. Least-connections adapts in real time.

The release() pattern: The caller must notify the load balancer when a request completes. This is how HAProxy’s leastconn and Kubernetes’ endpoint tracking work.

Putting It Together: Swap Algorithms at Runtime

# Start with round-robin

balancer: LoadBalancer = RoundRobinBalancer()

balancer.add_server("10.0.0.1")

balancer.add_server("10.0.0.2")

balancer.add_server("10.0.0.3")

# Handle requests

for request in incoming_requests:

server = balancer.select()

forward(request, server)

# Hot-swap to weighted — no caller changes needed

balancer = WeightedRoundRobinBalancer()

balancer.add_server("10.0.0.1", weight=3)

balancer.add_server("10.0.0.2", weight=1)

balancer.add_server("10.0.0.3", weight=1)

# Same loop works — the interface is identical

for request in incoming_requests:

server = balancer.select()

forward(request, server)

This is the power of the Strategy pattern. The routing code never changes. Only the algorithm inside the select() call changes.

Terraform uses this exact pattern — terraform apply calls the same interface whether the provider is AWS, GCP, or Azure.

Adding Health Checks

A production load balancer needs to detect failed servers. Here is a simple health check wrapper:

import time

import threading

import urllib.request

class HealthCheckedBalancer:

"""Wraps any LoadBalancer with periodic health checks."""

def __init__(self, balancer: LoadBalancer, check_interval: float = 5.0):

self._balancer = balancer

self._all_servers = {} # address -> health path

self._check_interval = check_interval

self._start_health_checks()

def add_server(self, address: str, health_path: str = "/health"):

self._all_servers[address] = health_path

self._balancer.add_server(address)

def select(self) -> str:

return self._balancer.select()

def _check_health(self):

for address, path in list(self._all_servers.items()):

try:

url = f"http://{address}{path}"

urllib.request.urlopen(url, timeout=2)

self._balancer.add_server(address) # re-add if healthy

except Exception:

self._balancer.remove_server(address) # remove if unhealthy

def _start_health_checks(self):

def run():

while True:

time.sleep(self._check_interval)

self._check_health()

threading.Thread(target=run, daemon=True).start()

Interview phrasing: “I would wrap the load balancer with a health check decorator. A background thread pings each server’s /health endpoint every 5 seconds.

Unhealthy servers are removed from the pool. When they recover, they are automatically re-added. This is how ELB health checks and Kubernetes readiness probes work.”

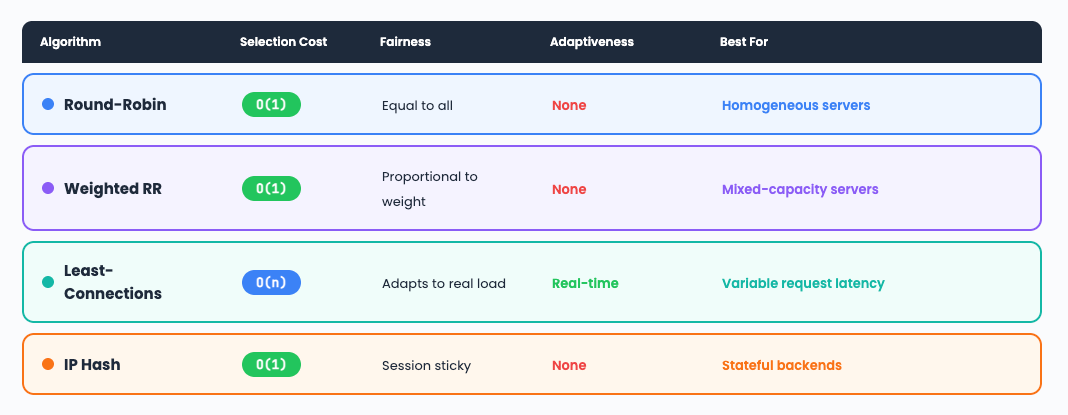

Comparison: When to Use What

The DevOps Connection

Interview Walkthrough Script

When the interviewer asks, “Design a load balancer”:

“I would use the Strategy pattern with a pluggable algorithm behind a common interface. The default would be weighted round-robin — pre-expand weights into a flat array for O(1) selection.

For variable-latency workloads, I would swap to least-connections, which routes to the server with the fewest active requests. I would wrap the balancer with health checks — a background thread pinging /health endpoints, automatically removing failed servers and re-adding recovered ones.

For session affinity, I would add an IP hash strategy. The key design decision is that all strategies implement the same interface, so the routing layer never changes when you swap algorithms.”

Challenge: Try It Yourself

IP Hash. Implement a strategy that hashes the client IP to consistently route the same client to the same server. Hint:

hash(ip) % len(servers).Weighted least-connections. Combine weights with connection counts:

score = connections / weight. The server with the lowest score wins.FastAPI integration. Build an HTTP reverse proxy that accepts requests and forwards them to backends using your load balancer. Use

httpxfor async forwarding.

Next week: Build a DNS Resolver in Python

Previous: Build a Rate Limiter | The GIL Explained | TTL & Key Expiry