Build Your Own Redis in Python: Key-Value Store From Scratch

Why every DevOps engineer already understands Redis internals — and how to prove it in a coding interview

You’ve typed redis.set("session:abc", token) a thousand times. You’ve configured redis.conf, set up replication, monitored memory with INFO, and debugged eviction policies at 2 AM.

But if an interviewer asks you to build a key-value store from scratch in Python, can you do it?

Most DevOps engineers freeze here — not because they don’t understand the concepts, but because they’ve never translated their operational knowledge into code. This issue fixes that.

By the end, you’ll have a working key-value store in Python that supports get, put, delete, and TTL-based expiry. More importantly, you’ll understand exactly which design pattern and data structure power it, and how to explain your choices in an interview.

The 3-Question Framework: How to Approach Any System Design Problem

Before writing a single line of code, train yourself to ask three questions. This framework works for every system design problem you’ll face in an interview:

Question 1: What does the system DO? → This reveals the design pattern.

A key-value store sits between a client and data storage. It intercepts requests, stores data, and returns it on demand. That’s the Proxy pattern — the same pattern behind Nginx reverse proxying, CDN caching, and API gateways. You already use proxies daily; now you’re building one.

Question 2: What operations must be FAST? → This reveals the data structure.

A key-value store needs O(1) lookup, O(1) insert, and O(1) delete. The data structure that gives you all three (on average) is a hash map. In Python, that’s a dict.

Question 3: What’s the core LOGIC? → This reveals the algorithm.

For basic get/put, it’s straightforward hash map operations. For TTL (time-to-live), we need lazy expiration: check whether a key has expired only when someone tries to access it. Redis uses this too, alongside a second, active mechanism.

System: Key-Value Store

Pattern: Proxy (sits between client and data)

Data Structure: dict (hash map) — O(1) for get, put, delete

Algorithm: Lazy expiration for TTL

Memorize this framework. It works for every system design question.
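If an interviewer follows up on Question 2 with “why is a dict O(1)?”, it helps to be able to sketch one. Below is a hypothetical toy ChainedHashMap using separate chaining. It is not how CPython’s dict works internally (CPython uses open addressing), and the 0.75 load-factor threshold is an illustrative choice, but the core idea is the same: hash the key to jump straight to one slot, and resize so slots stay nearly empty.

```python
class ChainedHashMap:
    """Toy hash map using separate chaining (illustration only)."""

    def __init__(self, capacity=8):
        self._buckets = [[] for _ in range(capacity)]
        self._size = 0

    def _bucket(self, key):
        # hash() turns the key into an int; modulo picks one bucket in O(1)
        return self._buckets[hash(key) % len(self._buckets)]

    def put(self, key, value):
        bucket = self._bucket(key)
        for i, (k, _) in enumerate(bucket):
            if k == key:
                bucket[i] = (key, value)  # overwrite an existing key
                return
        bucket.append((key, value))
        self._size += 1
        # Keep chains short: double the table once the load factor passes 0.75
        if self._size > 0.75 * len(self._buckets):
            self._resize(2 * len(self._buckets))

    def get(self, key, default=None):
        for k, v in self._bucket(key):
            if k == key:
                return v
        return default

    def delete(self, key):
        bucket = self._bucket(key)
        for i, (k, _) in enumerate(bucket):
            if k == key:
                del bucket[i]
                self._size -= 1
                return True
        return False

    def _resize(self, new_capacity):
        # Rehash every pair into a larger table; the item count is unchanged
        old_items = [pair for bucket in self._buckets for pair in bucket]
        self._buckets = [[] for _ in range(new_capacity)]
        for k, v in old_items:
            self._bucket(k).append((k, v))

    def __len__(self):
        return self._size
```

Lookups scan only one short chain, so they are O(1) on average. A pathological set of keys that all hash to the same bucket would degrade them to O(n), which is why “average case” is the honest answer.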

Step 1: The Simplest Key-Value Store (10 Lines)

Let’s start with the absolute minimum. This is what you’d write in the first 2 minutes of an interview:

```python
class KeyValueStore:
    def __init__(self):
        self._store = {}

    def put(self, key, value):
        self._store[key] = value

    def get(self, key):
        return self._store.get(key)

    def delete(self, key):
        return self._store.pop(key, None) is not None

    def keys(self):
        return list(self._store.keys())
```

That’s it: a working key-value store. The leading underscore in _store marks it as a private implementation detail, a Pythonic convention interviewers notice.

But an interviewer will immediately ask: “What about expiry? What happens when keys should expire after a certain time?”

Step 2: Adding TTL (Time-To-Live) — Lazy Expiration

This is where it gets interesting. Redis supports SETEX and EXPIRE commands that make keys auto-delete after a timeout. Let’s implement the same thing.

The trick is lazy expiration: don’t actively scan for expired keys. Instead, check the expiry timestamp when someone accesses the key. If it’s expired, delete it and return None.

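```python
import time


class KeyValueStore:
    def __init__(self):
        self._store = {}  # key → {"value": ..., "expiry": timestamp | None}

    def put(self, key, value, ttl_seconds=None):
        # Compare against None so ttl_seconds=0 isn't silently treated as "never expire"
        expiry = time.time() + ttl_seconds if ttl_seconds is not None else None
        self._store[key] = {"value": value, "expiry": expiry}

    def _is_expired(self, entry, now):
        # Keys stored without a TTL never expire
        return entry["expiry"] is not None and now > entry["expiry"]

    def get(self, key):
        entry = self._store.get(key)
        if entry is None:
            return None
        # Lazy expiration: check on access
        if self._is_expired(entry, time.time()):
            self._store.pop(key, None)  # pop() instead of del: no KeyError if already gone
            return None
        return entry["value"]

    def delete(self, key):
        entry = self._store.pop(key, None)
        # An expired key that hasn't been evicted yet counts as missing
        return entry is not None and not self._is_expired(entry, time.time())

    def keys(self):
        now = time.time()
        return [k for k, v in self._store.items() if not self._is_expired(v, now)]
```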

Why lazy expiration?

Redis actually uses both lazy and active expiration:

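Lazy: Check the TTL whenever a key is accessed (for example, on GET). If it has expired, delete it and return nil. This is what we implemented above. The cost is paid only on access, so there is no background work, but an expired key that nobody reads again stays in memory indefinitely.

Active: A periodic background job (about 10 times per second by default) samples 20 random keys that have a TTL, deletes the expired ones, and repeats if more than 25% of the sample had expired. This cleans up the keys lazy expiration never touches.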
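The active half can be sketched as a single cleanup pass over the Step 2 store’s internal dict (key → {"value", "expiry"}). This is a simplified, hypothetical active_expire_cycle(), not Redis’s actual C implementation: Redis samples directly from a dedicated table of keys with TTLs, while this sketch rebuilds a candidate list each round, which costs O(n). The sample size and 25% threshold mirror the numbers above.

```python
import random
import time


def active_expire_cycle(store, sample_size=20, threshold=0.25, now=None):
    """One active-expiry pass over `store` (key -> {"value": ..., "expiry": ...}).

    Samples up to `sample_size` keys that have a TTL, deletes the expired ones,
    and repeats while more than `threshold` of the sample was expired.
    Returns the number of keys deleted.
    """
    now = time.time() if now is None else now
    deleted = 0
    while True:
        # Only keys with a TTL are candidates (O(n) here; Redis avoids this scan)
        candidates = [k for k, v in store.items() if v["expiry"] is not None]
        if not candidates:
            return deleted
        sample = random.sample(candidates, min(sample_size, len(candidates)))
        expired = [k for k in sample if now > store[k]["expiry"]]
        for k in expired:
            del store[k]
        deleted += len(expired)
        # Stop once the sample suggests expired keys have become rare
        if len(expired) <= threshold * len(sample):
            return deleted
```

Against the Step 2 class you would call active_expire_cycle(store._store). Running it on a timer is the first challenge at the end of this issue.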

In an interview, implementing lazy expiration is enough. But mentioning that Redis combines both approaches shows depth — and that’s what separates a “pass” from a “strong hire.”

Step 3: Making It a Real Server With FastAPI

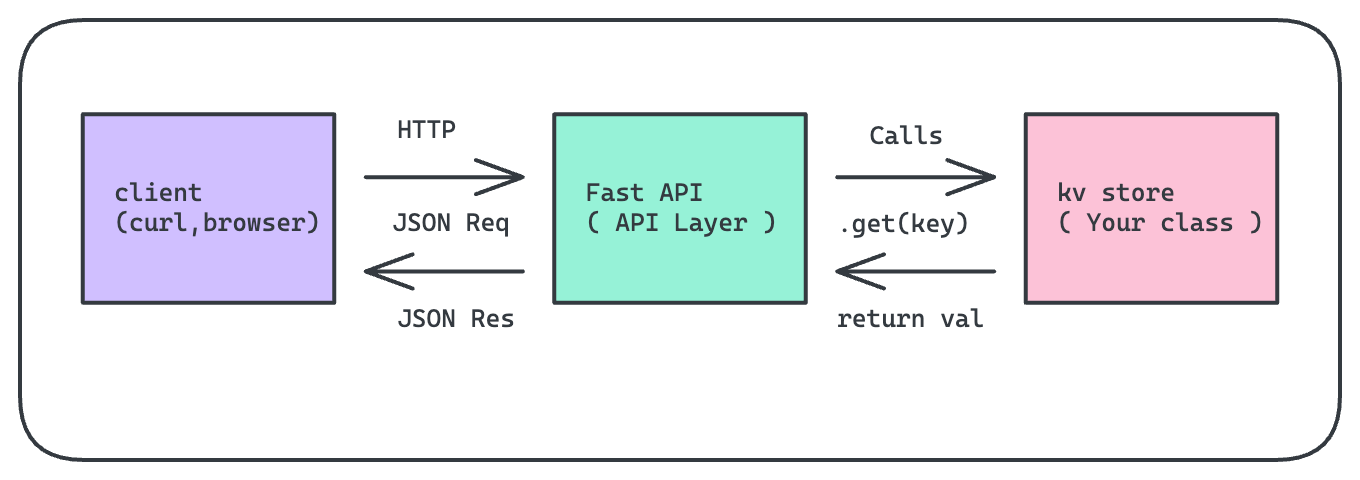

Your Python class is the engine. But how does a browser, CLI tool, or another service actually use it? Through an API layer. This is the piece most tutorials skip, and it’s the piece that makes everything click:

```python
from fastapi import FastAPI, HTTPException
from pydantic import BaseModel

# KeyValueStore is the Step 2 class: paste it above this line or import it from your own module
app = FastAPI()
store = KeyValueStore()  # lives in memory


class PutRequest(BaseModel):
    value: str
    ttl_seconds: int | None = None  # "int | None" syntax needs Python 3.10+


@app.put("/store/{key}")
def put_key(key: str, req: PutRequest):
    store.put(key, req.value, req.ttl_seconds)
    return {"status": "ok", "key": key}


@app.get("/store/{key}")
def get_key(key: str):
    value = store.get(key)
    if value is None:
        raise HTTPException(status_code=404, detail="Key not found or expired")
    return {"key": key, "value": value}


@app.delete("/store/{key}")
def delete_key(key: str):
    if not store.delete(key):
        raise HTTPException(status_code=404, detail="Key not found")
    return {"status": "deleted"}


@app.get("/store")
def list_keys():
    return {"keys": store.keys()}
```

Save the file as api.py, run it with uvicorn api:app --reload, and test it with curl:

```shell
curl -X PUT localhost:8000/store/name \
  -d '{"value":"sharon","ttl_seconds":60}' \
  -H "Content-Type: application/json"

curl localhost:8000/store/name
# {"key":"name","value":"sharon"}
```

In system design interviews, being able to describe this layered architecture — class → API → client — demonstrates that you think in terms of real systems, not just algorithms.

How to Explain This in an Interview (The 2-Minute Script)

Here’s exactly how to walk through this when an interviewer says “Design a key-value store”:

“I’d start with an in-memory hash map for O(1) reads and writes — in Python, that’s a dict.

For TTL support, I’d store each value alongside an expiry timestamp. On every GET, I’d check the timestamp and lazily evict expired keys. This avoids background threads for the basic case.

For the API layer, I’d use FastAPI with PUT, GET, and DELETE endpoints mapping directly to the store’s methods. Pydantic handles request validation.

The tradeoff right now is durability: everything is in memory. If the process restarts, all data is lost. To fix that, I’d add a write-ahead log: append every PUT and DELETE to a file. On restart, replay the log to rebuild state. This is essentially what Redis does with AOF persistence.

For production, I’d also consider: snapshots for faster recovery, background compaction to keep the log from growing unbounded, and replication for high availability.”

This answer covers data structure choice, algorithm design, API architecture, persistence strategy, and production concerns — all in under 2 minutes. That’s a strong hire signal.
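The log-and-replay idea from the script fits in a few dozen lines. This is a minimal, hypothetical AppendOnlyLog, not Redis’s actual AOF format: each PUT or DELETE is appended as one JSON line and fsynced before returning, replay() rebuilds a plain dict by re-applying operations in order, and compact() rewrites the file with one PUT per live key so the log stops growing. TTLs are left out to keep it short, and fsyncing every write is the safest but slowest choice (Redis makes this tradeoff configurable via appendfsync).

```python
import json
import os


class AppendOnlyLog:
    """Hypothetical append-only log: one JSON object per line."""

    def __init__(self, path):
        self.path = path

    def append(self, op, key, value=None):
        # Append and force the record to disk before acknowledging the write
        with open(self.path, "a", encoding="utf-8") as f:
            f.write(json.dumps({"op": op, "key": key, "value": value}) + "\n")
            f.flush()
            os.fsync(f.fileno())

    def replay(self):
        # Rebuild state by re-applying every logged operation in order
        state = {}
        if not os.path.exists(self.path):
            return state
        with open(self.path, encoding="utf-8") as f:
            for line in f:
                try:
                    record = json.loads(line)
                except json.JSONDecodeError:
                    break  # torn final write from a crash: stop at the last good record
                if record["op"] == "PUT":
                    state[record["key"]] = record["value"]
                elif record["op"] == "DELETE":
                    state.pop(record["key"], None)
        return state

    def compact(self):
        # Rewrite the log as one PUT per live key, then atomically swap it in
        state = self.replay()
        tmp_path = self.path + ".tmp"
        with open(tmp_path, "w", encoding="utf-8") as f:
            for key, value in state.items():
                f.write(json.dumps({"op": "PUT", "key": key, "value": value}) + "\n")
            f.flush()
            os.fsync(f.fileno())
        os.replace(tmp_path, self.path)
```

Writing the compacted file to a temporary path and swapping it in with os.replace() means a crash mid-compaction leaves the old log intact rather than a half-written one.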

Your DevOps Advantage: What You Already Know

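If you’re a DevOps engineer reading this, you’ve been working with these concepts every day without realizing they’re “interview material”: an Nginx reverse proxy is the Proxy pattern, every redis.set() is a hash map insert, Redis EXPIRE combines lazy and active expiration, and AOF is the append-and-replay log from the script above.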

You’re not learning new concepts. You’re learning to name them and code them in Python.

Challenge: Try It Yourself

Extend the key-value store with these features (solutions in next week’s issue):

Active expiration: Add a background thread that periodically scans and removes expired keys. Hint: use threading.Thread with daemon=True.

Max capacity: Add a max_keys parameter. When the store is full, reject new keys with an error. (Bonus: instead of rejecting, evict the least recently used key. That’s an LRU cache, which we’ll build in Issue 3!)

Key patterns: Implement a search(pattern) method that returns all keys matching a glob pattern like session:*. Hint: fnmatch.fnmatch().

Next week in Crack That Weekly: Redis Persistence in Python — Write-Ahead Logs & Snapshots. We’ll make our key-value store survive restarts using the exact same techniques Redis uses under the hood.

If this helped you see the connection between your DevOps work and coding interviews, share it with a fellow engineer who’s preparing for interviews.

About Crack That Weekly: A weekly newsletter that bridges the gap between real-world DevOps experience and coding interview prep. Every issue builds a real system from scratch in Python, connecting design patterns, data structures, and algorithms to tools you already use.

Written by Sharon Sahadevan, a DevOps engineer who got tired of interview prep that ignores operational experience.