Why Redis Uses C, Not Python: The GIL Explained

Your MiniRedis has a hidden bottleneck. Understanding it is the difference between a junior and senior answer in system design interviews.

We just spent three issues building a Redis clone in Python. It works. It handles TTL, persistence, and active expiration. But there is an elephant in the room.

Our MiniRedis cannot serve 100 clients at full speed. Not because of our code. Because of Python itself.

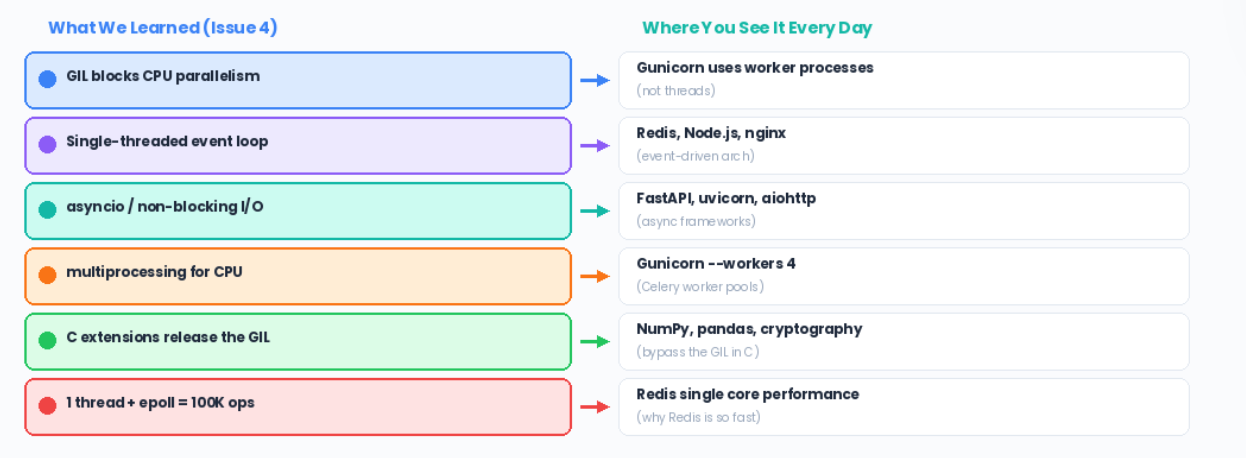

Today, we crack open the GIL, Python’s Global Interpreter Lock, and understand why Redis chose C, why your Python services need Gunicorn workers, and how to give the senior-level answer when an interviewer asks about concurrency.

The 3-Question Framework

What does the system DO? It serves thousands of concurrent clients doing GET/SET operations. This is the Reactor pattern: a single event loop dispatches work without spawning a thread per connection.

What operations must be FAST? Everything. Every GET and SET must complete in microseconds. The bottleneck is not the data structure (a dict is O(1)), but how we handle multiple clients at the same time.

What is the core LOGIC? Two competing models:

Multi-threaded: One thread per client. Simple to write, but Python’s GIL serializes CPU work anyway.

Event loop: One thread, non-blocking I/O. No GIL contention. This is what Redis actually does.

Pattern: Reactor (single event loop dispatches I/O)

Structure: Event queue + file descriptor table

Trade-off: Threads (simple but GIL-bound) vs Event loop (fast but complex)

What Is the GIL, Actually?

The Global Interpreter Lock is a mutex inside CPython (the standard Python interpreter). It allows only one thread to execute Python bytecode at a time, even on a 64-core machine.

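Here is the mental model: picture a single baton shared by all threads. Only the thread holding the baton may run Python bytecode; the others wait their turn, no matter how many cores are idle.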

This is not a bug. It is a design decision. The GIL makes CPython’s memory management (reference counting) thread-safe without fine-grained locking.

The trade-off: simpler internals, but no true CPU parallelism from threads.
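One way to see this trade-off for yourself is to time the same CPU-bound work run sequentially and then split across threads. The sketch below is only an illustration; count_down, run_sequential, and run_threaded are hypothetical helper names, not part of MiniRedis.

```python
import threading
import time


def count_down(n: int) -> int:
    """Pure-Python CPU work: the running thread needs the GIL for every step."""
    steps = 0
    while n > 0:
        n -= 1
        steps += 1
    return steps


def run_sequential(n: int, jobs: int) -> float:
    """Run `jobs` countdowns one after another; return elapsed seconds."""
    start = time.perf_counter()
    for _ in range(jobs):
        count_down(n)
    return time.perf_counter() - start


def run_threaded(n: int, jobs: int) -> float:
    """Run `jobs` countdowns in separate threads; return elapsed seconds."""
    threads = [threading.Thread(target=count_down, args=(n,)) for _ in range(jobs)]
    start = time.perf_counter()
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return time.perf_counter() - start


if __name__ == "__main__":
    n = 2_000_000
    print(f"sequential: {run_sequential(n, 2):.2f}s")
    print(f"2 threads:  {run_threaded(n, 2):.2f}s")
```

On a standard GIL-enabled CPython build, the two timings usually land close together, because the threads take turns instead of running side by side. Exact numbers depend on your machine and Python version.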

Why This Kills Our MiniRedis

Remember our active expiration from Issue 3? We used a background thread:

    # From Issue 3 - our background cleanup thread
    def _start_cleanup(self):
        def run():
            while True:
                time.sleep(self._cleanup_interval)
                self._cleanup_expired()  # <-- needs the GIL

        t = threading.Thread(target=run, daemon=True)
        t.start()

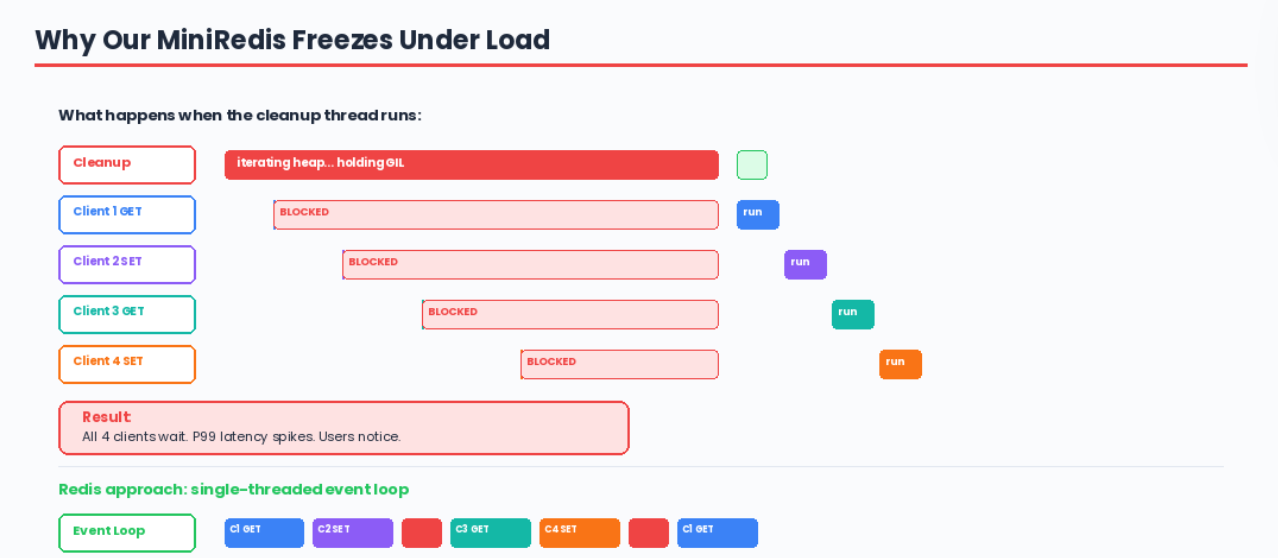

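While this cleanup thread is executing Python code, no client thread can. Not because of our lock, but because of the GIL. CPython does make the running thread offer up the GIL periodically (every 5 ms by default, see sys.getswitchinterval()), but client threads still have to win it back, so every millisecond spent iterating the heap is a millisecond not spent serving requests.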

With 100 concurrent clients and a cleanup cycle running, you get 101 threads competing for one lock, and only one of them executes Python code at any instant.

This is why Redis uses C. In C, there is no GIL. Threads can truly run in parallel on multiple cores. But Redis goes further: it does not even use multiple threads for the main workload. It uses something better.

How Redis Actually Works: The Event Loop

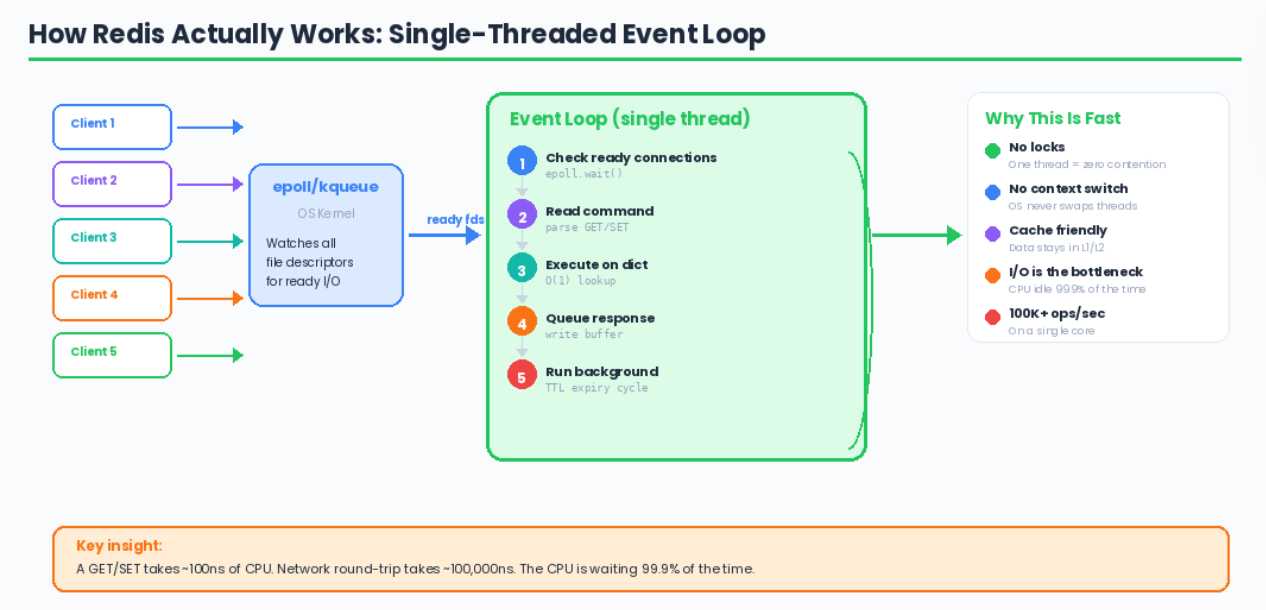

Redis uses a single-threaded event loop based on epoll/kqueue. One thread. No locks. No context switching. No GIL.

Why is this fast?

No lock contention. One thread means no locks needed. Zero overhead.

No context switching. The OS does not swap threads in and out. Pure CPU time.

CPU cache friendly. One thread keeps data in the L1/L2 cache. Threads thrash the cache.

I/O is the bottleneck, not CPU. A GET/SET in memory takes ~100ns. Network I/O takes ~100us. The CPU is idle 99.9% of the time, waiting for network packets. One thread is enough.
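To make the event loop idea concrete, here is a minimal reactor sketch built on Python's selectors module, which picks epoll on Linux and kqueue on BSD/macOS. It is an echo server, not Redis, and the names make_server, accept, echo, and serve_once are illustrative assumptions.

```python
import selectors
import socket


def accept(sel, server_sock):
    """Handler for the listening socket: register each new client for reads."""
    conn, _addr = server_sock.accept()
    conn.setblocking(False)
    sel.register(conn, selectors.EVENT_READ, echo)


def echo(sel, conn):
    """Handler for a client socket: echo bytes back, or clean up on EOF."""
    data = conn.recv(4096)
    if data:
        # Fine for a sketch with small payloads; a real server would
        # buffer partial writes instead of calling sendall here.
        conn.sendall(data)
    else:
        sel.unregister(conn)
        conn.close()


def make_server(host="127.0.0.1", port=0):
    """Create a non-blocking listening socket and register it with a selector."""
    sel = selectors.DefaultSelector()
    server = socket.socket()
    server.setsockopt(socket.SOL_SOCKET, socket.SO_REUSEADDR, 1)
    server.bind((host, port))
    server.listen()
    server.setblocking(False)
    sel.register(server, selectors.EVENT_READ, accept)
    return sel, server


def serve_once(sel, timeout=1.0):
    """One loop iteration: wait for ready sockets, then dispatch their handlers."""
    events = sel.select(timeout)
    for key, _mask in events:
        handler = key.data  # the callback stored at registration time
        handler(sel, key.fileobj)
    return len(events)
```

Calling serve_once in a `while True` loop gives you the whole server: one thread, one selector, and a table mapping file descriptors to handlers. No thread is ever parked waiting on a single client.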

The Python Concurrency Landscape

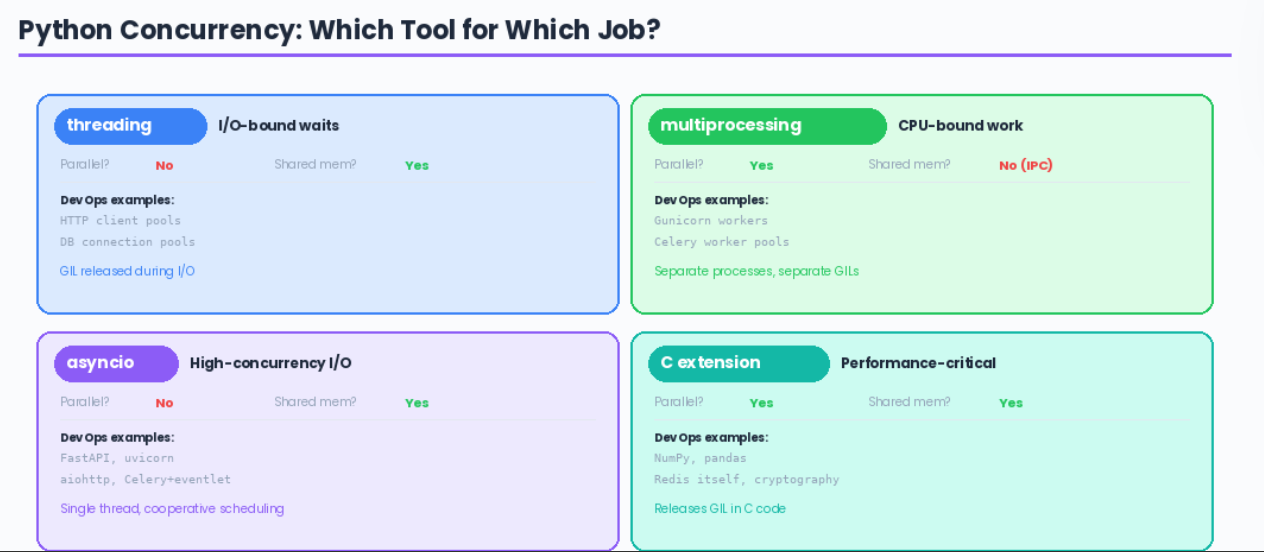

So if threads do not work for CPU-bound Python, what are the options?

The interview-level insight: Python threads are only useful when you are waiting for I/O (network calls, disk reads).

The GIL is released during I/O waits, so threads can overlap their waiting time. But for CPU work like iterating a heap or computing a hash, threads give you zero speedup.
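The I/O half of that claim is easy to check with a small experiment. In this sketch, time.sleep stands in for a network call (it releases the GIL while waiting); fake_io and timed_io are hypothetical names chosen for the demo.

```python
import time
from concurrent.futures import ThreadPoolExecutor


def fake_io(delay: float) -> float:
    """Stand-in for a network call; the GIL is released while sleeping."""
    time.sleep(delay)
    return delay


def timed_io(workers: int, calls: int, delay: float) -> float:
    """Run `calls` simulated I/O waits on `workers` threads; return elapsed seconds."""
    start = time.perf_counter()
    with ThreadPoolExecutor(max_workers=workers) as pool:
        list(pool.map(fake_io, [delay] * calls))
    return time.perf_counter() - start


if __name__ == "__main__":
    print(f"1 thread:  {timed_io(1, 4, 0.2):.2f}s")
    print(f"4 threads: {timed_io(4, 4, 0.2):.2f}s")
```

With four threads the waits overlap, so the total is close to one delay instead of four. For CPU-bound work, the usual route is separate processes (multiprocessing or ProcessPoolExecutor), since each process has its own interpreter and its own GIL.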

Building a Better MiniRedis: asyncio Version

Here is what our MiniRedis would look like using asyncio instead of threads — the same pattern Redis uses, just in Python:

    import asyncio
    import time


    class AsyncMiniRedis:
        def __init__(self):
            self._store = {}
            self._expiry_task = None  # keep a reference so the task is not garbage-collected

        async def handle_client(self, reader, writer):
            try:
                while True:
                    data = await reader.readline()
                    if not data:  # client closed the connection
                        break
                    command = data.decode().strip().split()
                    response = self._execute(command)
                    writer.write(f"{response}\n".encode())
                    await writer.drain()
            finally:
                writer.close()
                await writer.wait_closed()

        def _execute(self, command):
            if not command:  # blank line from the client
                return "ERR empty command"
            cmd = command[0].upper()
            if cmd == "GET":
                if len(command) != 2:
                    return "ERR wrong number of arguments"
                entry = self._store.get(command[1])
                if entry is None:
                    return "(nil)"
                # Lazy expiration: drop the key if its TTL has passed
                if entry["expiry"] and time.time() > entry["expiry"]:
                    del self._store[command[1]]
                    return "(nil)"
                return entry["value"]
            elif cmd == "SET":
                if len(command) < 3:
                    return "ERR wrong number of arguments"
                ttl = None
                # SET key value EX seconds
                if len(command) > 4 and command[3].upper() == "EX":
                    try:
                        ttl = time.time() + int(command[4])
                    except ValueError:
                        return "ERR value is not an integer"
                self._store[command[1]] = {"value": command[2], "expiry": ttl}
                return "OK"
            return "ERR unknown command"

        async def start(self, host="0.0.0.0", port=6380):
            server = await asyncio.start_server(self.handle_client, host, port)
            # Schedule background expiry on the same event loop
            self._expiry_task = asyncio.create_task(self._expire_cycle())
            async with server:
                await server.serve_forever()

        async def _expire_cycle(self):
            """Expiry cycle that yields to the event loop between scans."""
            while True:
                await asyncio.sleep(0.1)
                now = time.time()
                expired = [
                    k for k, v in list(self._store.items())
                    if v["expiry"] and v["expiry"] <= now
                ]
                for k in expired:
                    self._store.pop(k, None)


    if __name__ == "__main__":
        asyncio.run(AsyncMiniRedis().start())

No threads. No locks. No GIL contention. The await points are where the event loop can switch to another client. The _expire_cycle yields control at its await asyncio.sleep, so client requests wait only while a single scan is in progress.
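One caveat: everything between two await points runs uninterrupted, so a scan over a very large store would hold up clients for the length of that scan. A possible refinement, sketched here under the assumption that the store has the same shape as in AsyncMiniRedis, is to yield back to the loop after every chunk of keys. The name expire_in_chunks and the chunk_size default are hypothetical.

```python
import asyncio
import time


async def expire_in_chunks(store: dict, chunk_size: int = 1000) -> int:
    """Delete expired keys, yielding to the event loop after every chunk.

    `store` maps key -> {"value": ..., "expiry": timestamp or None}.
    Returns the number of keys removed.
    """
    now = time.time()
    removed = 0
    keys = list(store)  # snapshot: the dict may change while we are suspended
    for i, key in enumerate(keys, start=1):
        entry = store.get(key)  # the key may have been deleted since the snapshot
        if entry is not None and entry["expiry"] and entry["expiry"] <= now:
            del store[key]
            removed += 1
        if i % chunk_size == 0:
            await asyncio.sleep(0)  # let pending client handlers run
    return removed
```

The snapshot is still a full copy of the keys, but the per-key checks are now spread across many short turns of the loop. The longest pause a client can see is one chunk of checks plus the time to take the snapshot, not one whole scan.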

The DevOps Connection
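The GIL also shapes how Python web services are deployed. Gunicorn runs several worker processes, and each process has its own interpreter and its own GIL, so requests can be served on multiple cores at once. Below is a sketch of a gunicorn.conf.py; the bind address and timeout are illustrative assumptions, and the worker count follows the (2 x cores) + 1 starting point suggested in Gunicorn's documentation.

```python
# gunicorn.conf.py (illustrative values, tune them for your service)
import multiprocessing

# Address and port the workers listen on
bind = "0.0.0.0:8000"

# One GIL per process: more processes means more cores doing Python work
workers = multiprocessing.cpu_count() * 2 + 1

# Default synchronous worker; each worker handles one request at a time
worker_class = "sync"

# Seconds before a silent worker is killed and restarted
timeout = 30
```

You would start the service with something like `gunicorn -c gunicorn.conf.py app:app`, where `app:app` is a placeholder for your own module and WSGI callable.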

Interview Walkthrough Script

When the interviewer asks, “Why doesn’t Redis use Python?” or “Explain the GIL”:

“The GIL is a mutex in CPython that allows only one thread to execute Python bytecode at a time.

This means Python threads cannot achieve true CPU parallelism; they are only useful for overlapping I/O waits. Redis needs microsecond latency for every operation, so it uses C with a single-threaded event loop based on epoll.

One thread, no locks, no context switching. The CPU work per command is tiny; it is I/O bound, so a single thread handling thousands of file descriptors via epoll is more efficient than thousands of threads fighting for the GIL.

In Python, the equivalent architecture is asyncio.”

Challenge: Try It Yourself

Benchmark threads vs asyncio. Spin up 100 concurrent clients hitting your MiniRedis. Compare latency with the threaded version (Issue 3) vs the asyncio version above.

Add pipelining. Real Redis clients send multiple commands in one TCP write. Modify handle_client to parse and execute batched commands.

Implement pub/sub. Add SUBSCRIBE and PUBLISH commands using asyncio. This is where event loops truly shine.
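For the benchmark challenge, a starting point could look like the sketch below. It assumes the server under test speaks the newline-delimited protocol used by AsyncMiniRedis (one command per line, one response line back); one_client and bench are hypothetical names, and the percentile math is deliberately simple.

```python
import asyncio
import time


async def one_client(host: str, port: int, requests: int) -> list:
    """Send `requests` SET commands on one connection; return per-request latencies."""
    reader, writer = await asyncio.open_connection(host, port)
    latencies = []
    try:
        for i in range(requests):
            start = time.perf_counter()
            writer.write(f"SET key{i} value{i}\n".encode())
            await writer.drain()
            await reader.readline()  # wait for the single-line response
            latencies.append(time.perf_counter() - start)
    finally:
        writer.close()
        await writer.wait_closed()
    return latencies


async def bench(host: str, port: int, clients: int = 100, requests: int = 100) -> dict:
    """Run `clients` concurrent connections and summarize latency in milliseconds."""
    results = await asyncio.gather(
        *(one_client(host, port, requests) for _ in range(clients))
    )
    all_lat = sorted(lat for per_client in results for lat in per_client)
    return {
        "count": len(all_lat),
        "p50_ms": all_lat[len(all_lat) // 2] * 1000,
        "p99_ms": all_lat[int(len(all_lat) * 0.99)] * 1000,
    }
```

With a server running, `asyncio.run(bench("127.0.0.1", 6380))` returns the summary dict. Run it against both versions on the same machine and compare p99 rather than the average, since tail latency is where contention tends to show up.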

Next week: Build a Rate Limiter From Scratch in Python

Previous: TTL & Key Expiry | Redis Persistence | Build Your Own Redis